AI-supported modeling: revolution in oral surgery

by Bertram Sabrowsky-Hirsch, MSc

The combination of artificial intelligence (AI) and medical image processing is revolutionizing oral surgery. Automated modeling methods make it possible to create personalized patient models more efficiently and precisely. This enables improved diagnoses, customized treatments and faster surgical preparation.

- Automatic modeling of patients

- Pipeline-supported development of modeling methods

- Cooperation with CADS GmbH

- Source list

- Author


Automatic modeling of patients

Patient-specific modeling has great potential in diagnosis and treatment and is an important step towards personalized medicine. In oral surgery, 3D models of the examined anatomy offer an intuitive visualization as well as the basis for surgical planning and the design of patient-specific implants. In practice, modeling experts create these models from medical image data using software tools, a time-consuming and costly process. Thanks to advances in AI-based annotation, comprehensive automated solutions are now technically feasible. However, their use outside of research is still limited, because preparing suitable data sets for training the methods is itself time-consuming. In a cooperation between RISC Software GmbH and CADS GmbH, automatic modeling methods were developed efficiently with the help of an AI-supported pipeline.

Pipeline-supported development of modeling methods

As part of its research activities, the Medical Informatics unit at RISC Software GmbH is developing an AI-supported pipeline for modeling medical data sets. Unlike comparable technologies, such as the AI platform MONAI [1], this pipeline also supports method development itself, starting with the inspection, selection and stratification of the database. It allows any AI-based and classic image processing methods to be linked into processes that can be applied equally to individual patient data and to complete data collections. Intermediate stages, such as the training of AI methods or the calculation of atlas data sets, are independent processes in the same way as the final modeling method that is integrated into end applications, and as such they are data-agnostic. This approach maximizes the reusability of the processes.
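The stratification of a database mentioned above can be illustrated with a small sketch. It is not the pipeline's implementation: the function name `stratified_split`, the metadata field `scanner` and the 20 % validation share are assumptions chosen for illustration only. The idea is that every stratum (here, a hypothetical scanner type) is represented in both the training and the validation set.

```python
import random
from collections import defaultdict


def stratified_split(records, key, val_fraction=0.2, seed=0):
    """Split records into train/validation lists, stratum by stratum.

    `records` is a list of dicts; `key` names the (hypothetical) metadata
    field used for stratification.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for record in records:
        strata[record[key]].append(record)

    train, val = [], []
    for group in strata.values():
        group = group[:]  # copy so the caller's ordering is untouched
        rng.shuffle(group)
        if len(group) > 1:
            # At least one validation case, but never the whole stratum
            n_val = max(1, round(len(group) * val_fraction))
            n_val = min(n_val, len(group) - 1)
        else:
            n_val = 0  # a single case stays in the training set
        val.extend(group[:n_val])
        train.extend(group[n_val:])
    return train, val


# Toy database: 10 scans from scanner "A", 5 from scanner "B" (made-up data)
scans = [{"id": i, "scanner": "A" if i < 10 else "B"} for i in range(15)]
train, val = stratified_split(scans, key="scanner")
print(len(train), len(val))  # 12 3
```

With a purely random split, a rare stratum could end up entirely in one of the two sets; splitting per stratum avoids that.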

Figure 1: Thanks to the flexible modular concept of the pipeline, processing steps can be strung together as required and linked to form sequences. These in turn can be embedded as sub-steps in higher-level processes.
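The modular concept can be sketched in a few lines of Python. This is a minimal illustration under assumptions, not the actual pipeline: the class names `Step` and `StepSequence`, the helper `run_on_collection` and the toy processing functions are all hypothetical. It shows how a sequence of steps can itself act as a step, so that sequences can be nested inside higher-level processes and applied to a single data set or to a whole collection.

```python
from typing import Any, Callable, Iterable, List


class Step:
    """A named processing step wrapping a function from data to data."""

    def __init__(self, name: str, func: Callable[[Any], Any]):
        self.name = name
        self.func = func

    def run(self, data: Any) -> Any:
        return self.func(data)


class StepSequence(Step):
    """A chain of steps that is itself a step, so it can be nested."""

    def __init__(self, name: str, steps: List[Step]):
        self.name = name
        self.steps = steps

    def run(self, data: Any) -> Any:
        # Feed the output of each step into the next one
        for step in self.steps:
            data = step.run(data)
        return data


def run_on_collection(step: Step, collection: Iterable[Any]) -> List[Any]:
    """Apply the same process to every item of a data collection."""
    return [step.run(item) for item in collection]


# Toy "images" are flat lists of intensities; the steps are stand-ins
normalize = Step("normalize", lambda img: [v / max(img) for v in img])
threshold = Step("threshold", lambda img: [1 if v >= 0.5 else 0 for v in img])
segment = StepSequence("segment", [normalize, threshold])
volume = Step("volume", lambda mask: sum(mask))

# "segment" is embedded as a sub-step of the higher-level process
model_patient = StepSequence("model_patient", [segment, volume])

print(model_patient.run([10, 40, 80, 100]))  # 2
print(run_on_collection(model_patient, [[10, 40, 80, 100], [5, 5, 10]]))  # [2, 3]
```

Because every step shares the same `run` interface, an AI-based method and a classic image processing method would be interchangeable from the point of view of the enclosing sequence.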

The pipeline has already been used successfully in a number of research projects, such as the automatic modeling of aneurysm patients for the MEDUSA surgical simulator [2]. In these projects, the pipeline enables the automated creation of complex patient models and thus supports downstream tasks: visualization of the anatomy, automated feature calculation, printing of phantoms and hybrid surgical simulation. By integrating modern AI methods such as the nnU-Net framework [3], the pipeline achieves state-of-the-art results.

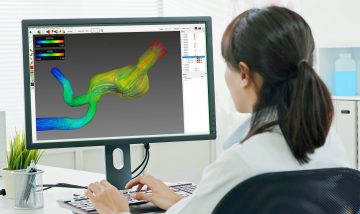

Figure 2: Modeling of aneurysm patients in the MEDUSA research project.

Figure 3: Modeling of facial and maxillofacial surgery patients from the cooperation with CADS GmbH.

Cooperation with CADS GmbH

As part of the collaboration, the AI-supported pipeline was used for the annotation process as well as for the training and validation of AI methods. The annotation process in particular benefited from the automated preparation of image data for external service providers and from the subsequent validation and integration of the resulting annotations into the database. Training, validation and statistical evaluation of the AI methods were also fully automated and can easily be re-applied as the database grows. The results were presented at the AAPR 2023 [4] and convinced the project partner's medical experts. Finally, the pipeline was embedded in an annotation platform from CADS GmbH, which will enable medical experts to automatically generate patient-specific models from image data in the future. The pipeline also supports the ongoing expansion of the database with new annotations and the subsequent optimization of the AI methods, which makes it possible to continuously improve the methods and to include additional anatomical structures in the database. As the collaboration continues, the pipeline will be expanded and used to model further anatomical structures. A particular focus is on automatically evaluating and selecting image data according to quality criteria that reflect their suitability for modeling, in order to make the annotation platform even more efficient for its users.
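An automated statistical evaluation of segmentation results could look roughly like the following sketch. The article does not state which metrics were used; the Dice coefficient, 2·|A∩B| / (|A| + |B|), is assumed here because it is a common measure of overlap between a predicted mask A and a reference annotation B. The function names and the toy masks are hypothetical.

```python
import statistics


def dice_coefficient(prediction, reference):
    """Dice overlap of two binary masks given as flat 0/1 sequences."""
    if len(prediction) != len(reference):
        raise ValueError("masks must have the same number of voxels")
    overlap = sum(1 for p, r in zip(prediction, reference) if p and r)
    total = sum(1 for p in prediction if p) + sum(1 for r in reference if r)
    # Two empty masks are treated as a perfect match by convention
    return 1.0 if total == 0 else 2.0 * overlap / total


def evaluate(cases):
    """Compute per-case Dice scores and their mean for a dict of cases."""
    scores = {
        name: dice_coefficient(pred, ref) for name, (pred, ref) in cases.items()
    }
    return scores, statistics.mean(scores.values())


# Made-up validation cases: (predicted mask, reference annotation)
cases = {
    "case_a": ([0, 1, 1, 0], [0, 1, 1, 1]),
    "case_b": ([1, 1, 0, 0], [1, 1, 0, 0]),
}
scores, mean_dice = evaluate(cases)
print(scores["case_a"], scores["case_b"])  # 0.8 1.0
```

Since `evaluate` only depends on the dictionary of cases, the same evaluation can simply be rerun whenever new annotated data sets are added.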

Figure 4: Examples of automatically modeled data sets from facial and maxillofacial surgery patients.

Source list

- [1] Cardoso, M. Jorge, et al. “MONAI: An open-source framework for deep learning in healthcare.” arXiv preprint arXiv:2211.02701 (2022).

- [2] MEDUSA research project, medusa.health/en

- [3] Isensee, Fabian, et al. “nnU-Net: a self-configuring method for deep learning-based biomedical image segmentation.” Nature Methods 18.2 (2021): 203-211.

- [4] Sabrowsky-Hirsch, B., et al. “Automatic Anatomical Annotation of CBCT Scans for Maxillofacial Prosthetics.” Proceedings of the Joint Austrian Computer Vision and Robotics Workshop 2023, Verlag der TU Graz, 2023.


Author

Bertram Sabrowsky-Hirsch, MSc

Researcher & Developer