Reengineering: Engineering solution shines through web porting

Why do engineering solutions also need a web version?

by Martin Hochstrasser MSc, DI (FH) Josef Jank and DI (FH) Alexander Leutgeb

A well-known proverb says: “Nothing is as constant as change” (Heraclitus of Ephesus). Of course, this also applies to software and its user interfaces in particular. These applications are increasingly shifting towards web technologies because they are available to a wide range of users on all platforms without installation. Successful desktop applications must also face up to this pressure to modernize, as otherwise they run the risk of no longer being used and thus losing the resources for further development. With older software systems, such new requirements often lead to a complete redevelopment of the software. Many see it as an opportunity to free themselves from legacy issues and switch to their preferred platform and programming language. In the initial euphoria, the effort and risk are often underestimated and many of these projects far exceed the initially assumed costs.

A well-considered overall strategy is therefore important for the technically and economically successful implementation of such projects. The aim should be a minimally invasive solution where only those parts are changed that are actually affected. The following applies to all other parts: “Never change a running system”. This avoids unnecessary effort and sources of error. Customers receive operational software at an early stage, can provide ongoing feedback based on this and thus steer further development in the right direction. In addition, the phase of parallel maintenance of the existing software and new development is kept short. Modernization or reengineering means that the software is technologically fit for the future and future investments can be better justified thanks to the potentially broader user base. This reengineering approach and an agile approach can keep costs low and achieve a good cost-benefit ratio.

Contents

- Why do engineering solutions also need a web version?

- Use case: Reengineering of the HOTINT desktop application for multi-body simulation

- Maximum customer benefit in just three months

- Possible solutions

- Implementation concept

- Technical realization and implementation

- Summary / Outlook

- Authors

Use case: Reengineering of the HOTINT desktop application for multi-body simulation

HOTINT is a free software package for modelling, simulation and optimization of mechatronic systems, especially flexible multi-body systems. It includes solvers for static, dynamic and modal analyses, a modular object-oriented C++ system framework, a comprehensive element library and a graphical user interface with tools for visualization and post-processing. HOTINT has been continuously developed for more than 25 years and is currently being further developed by the Linz Center of Mechatronics GmbH (LCM) as part of several scientific and industrial projects in both the open and closed source area. The focus today is on the modeling, simulation and optimization of complex mechatronic systems (including control systems), i.e. general mechanical systems in combination with electrical, magnetic or hydraulic components (e.g. sensors and actuators). Parameterized model setups can be implemented via the HOTINT scripting language or directly within the C++ modeling framework. Other important features are the support of interfaces, e.g. a versatile TCP/IP interface that enables coupling with other simulation tools (e.g. co-simulation with MATLAB/Simulink or coupling with a particle simulator for fluid-structure and particle-structure interaction analysis), as well as integration into the optimization framework SyMSpace. The software is realized as a C++ application and uses Microsoft’s MFC to implement the user interface. The OpenGL programming interface is used to visualize the 3D representation.

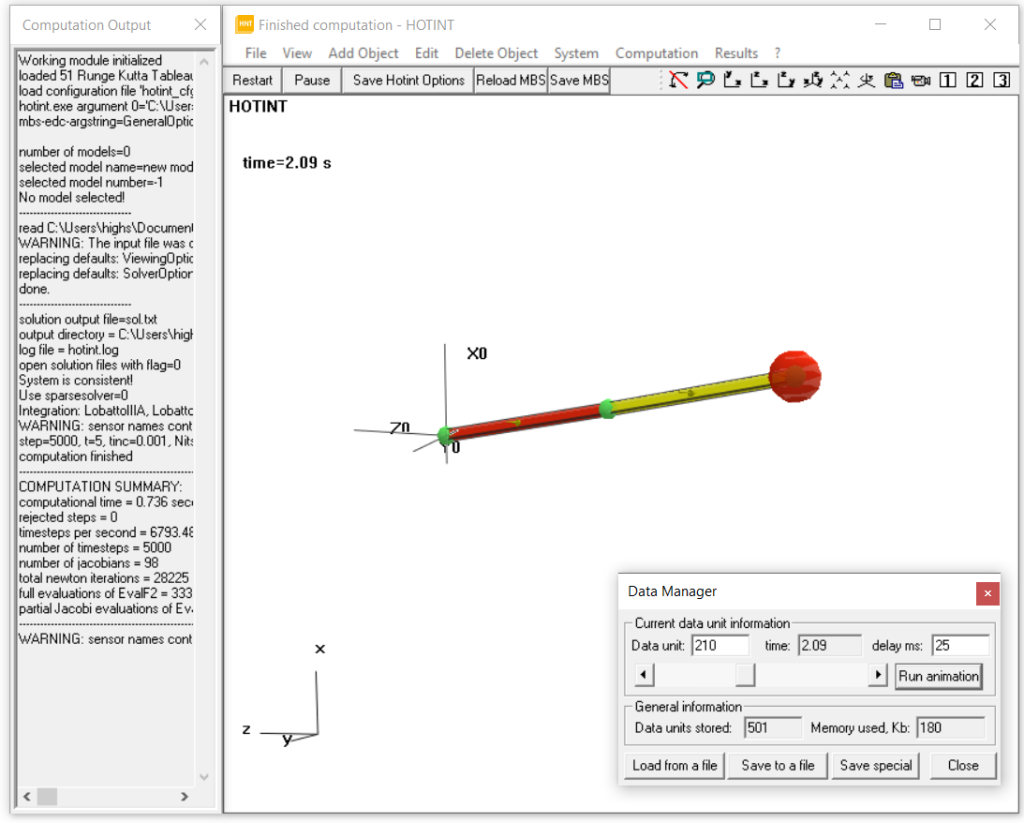

Screenshot 1: old desktop application HOTINT

Maximum customer benefit in just three months

The framework conditions and primary objectives were defined together with LCM during the initial meetings. In general, the overarching goal was to implement a web interface for the existing desktop application. However, it was relatively unclear to everyone involved whether the proposed solution ideas could be implemented within the planned period of 3 months, or whether unforeseeable limits or problems would be encountered. For this reason, the final results were not precisely defined, but the risk was limited by timeboxing (defined incremental product development with a fixed duration). The team’s task was to generate as much customer benefit as possible in the time available – ideally to make the current range of functions available in full in the web client. In line with the agile approach, the risk was also minimized through two-week sprints with joint reviews and the planning for the subsequent sprints based on these reviews. The first sprint of each phase had similarities to spikes, where the basic feasibility and effort for a technical story is determined. Based on this, the actual implementation was driven forward in the subsequent sprints.

In hindsight, this lightweight process worked well for the project, but this does not necessarily apply to other projects. If the customer has little experience in agility and software development, considerably more persuasion or a more formal process may be necessary. In the current case, the well-coordinated team already had many years of experience in the field of 3D visualization and was therefore able to make the right decisions based on that experience. This aspect is more important than a well-thought-out process (without wanting to minimize its importance), especially when it comes to technically demanding topics. For further information on this topic, we would like to refer you to our specialist articles Agile vs. classic software development, Software reengineering: When does the old system become a problem? and Modernization of software, as these approaches and ideas were also applied here.

Possible solutions

The starting point was a classic desktop application that was implemented in C++ based on the Microsoft Foundation Classes (MFC). OpenGL was used for the 3D display. The aim was to also make the application operable via a web browser. In principle, there are two options for porting the native HOTINT C++ application to a web application:

The first option is a pure client-side web application. It would either have to be completely re-implemented with web technologies without reusing any source code, or the C++ application would be ported semi-automatically to a web application using WebAssembly and Emscripten.

As a second approach, a client/server-based web application can be implemented, with HOTINT running as a C++ server and communicating with the client-side web application (communication protocol for structured data exchange: parameters, result data, 3D visualization, mouse interaction). The 3D visualizations can be transmitted either in the form of structured data (complete transmission or only of changed parts) or directly in the form of an image (image streaming).
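The kinds of data named above could be modelled as a small set of tagged messages. The following TypeScript sketch is only an illustration under our own assumptions: the message names, their fields and the `describe` helper are hypothetical and do not reflect the actual HOTINT protocol.

```typescript
// Hypothetical message types for the client/server exchange. All names and
// fields are illustrative assumptions, not the actual HOTINT protocol.
type Message =
  | { kind: "parameters"; values: Record<string, number | string | boolean> }
  | { kind: "resultData"; timeStep: number; values: Record<string, number> }
  | { kind: "sceneUpdate"; timeStep: number; changedElementIds: number[] }
  | { kind: "imageFrame"; timeStep: number; jpeg: Uint8Array }
  | { kind: "mouse"; action: "rotate" | "pan" | "zoom"; dx: number; dy: number };

// Returns a short label for a message. The switch covers every member of the
// union, so an unhandled new message kind is reported by the compiler.
function describe(msg: Message): string {
  switch (msg.kind) {
    case "parameters":
      return `${Object.keys(msg.values).length} parameter(s)`;
    case "resultData":
      return `results for step ${msg.timeStep}`;
    case "sceneUpdate":
      return `scene delta for step ${msg.timeStep} (${msg.changedElementIds.length} elements)`;
    case "imageFrame":
      return `image for step ${msg.timeStep} (${msg.jpeg.length} bytes)`;
    case "mouse":
      return `${msg.action} by (${msg.dx}, ${msg.dy})`;
  }
}
```

A `sceneUpdate` message corresponds to transmitting only the changed parts of the scene, while `imageFrame` corresponds to image streaming.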

A purely client-side web application was ruled out in advance, as a completely new implementation would have gone beyond the scope of the project. Semi-automated porting was not possible due to the use of closed-source libraries from Intel, Microsoft and others. Another option would have been to implement it on the basis of ParaView, as a client/server-based web application is already available there (link to ParaView web application). There are two options for 3D visualization:

- Purely client-side rendering of the 3D scene

- Server-side rendering of the 3D scene and transfer of the image to the client (image stream).

It is also possible to use both variants and perform an overlay in the client (hybrid 3D visualization). ParaView uses the VTK library for visualization. The necessary infrastructure for 3D visualization on the web based on a client/server architecture is already provided by VTK (link to vtkWeb). The 3D visualization for the client can be calculated purely on the server (image stream), purely on the client or on both (hybrid). The decision for a variant depends on the complexity of the 3D scene to be transferred and the associated data volume.
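How such a decision might be expressed in code is sketched below. This is a simplified heuristic under our own assumptions: the `SceneEstimate` fields, the threshold value and the function name are hypothetical and were not part of the project.

```typescript
type RenderMode = "client" | "server-image-stream" | "hybrid";

// Hypothetical description of a scene, used only for this illustration.
interface SceneEstimate {
  sceneBytes: number;          // size of the structured scene description
  changesEveryStep: boolean;   // true if the geometry changes during animation
  needsClientOverlay: boolean; // true if something is drawn in the browser on top
}

// Arbitrary illustrative threshold, not a measured value.
const MAX_CLIENT_SCENE_BYTES = 20 * 1024 * 1024;

// Small, static scenes are rendered in the client. Large or constantly
// changing scenes are rendered on the server, with an optional client overlay.
function chooseRenderMode(scene: SceneEstimate): RenderMode {
  const heavy = scene.sceneBytes > MAX_CLIENT_SCENE_BYTES || scene.changesEveryStep;
  if (!heavy) return "client";
  return scene.needsClientOverlay ? "hybrid" : "server-image-stream";
}
```

In practice the threshold would have to be determined from the available bandwidth and the capabilities of the target devices.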

Implementation concept

For the chosen solution approach, a client/server architecture, the rendering of the 3D scenes had to be adapted. The original idea was to realize the client-side rendering exclusively with the help of the VTK library. In the course of implementation, however, it turned out that the internal rendering interface could not be converted to an abstract description for VTK without great effort. Since the simulation kernel updates the 3D visualization at every time step during the animation, mapping to a different rendering API would also have been associated with performance losses.

The following procedure was therefore chosen: The use of OpenGL was retained unchanged, but instead of the output on the screen, an image is generated (off-screen rendering) and transferred from the server to the client. This approach offers the following advantages:

- Basic rendering in HOTINT is not subject to any major changes.

- Extensions and adjustments to the HOTINT element routines can be carried out in the usual way.

- The changes only affect the new web backend itself, which means that the maintainability of HOTINT remains unchanged.

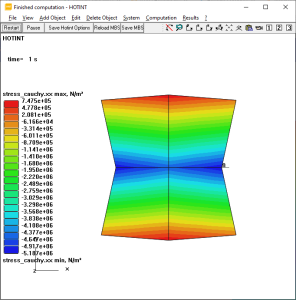

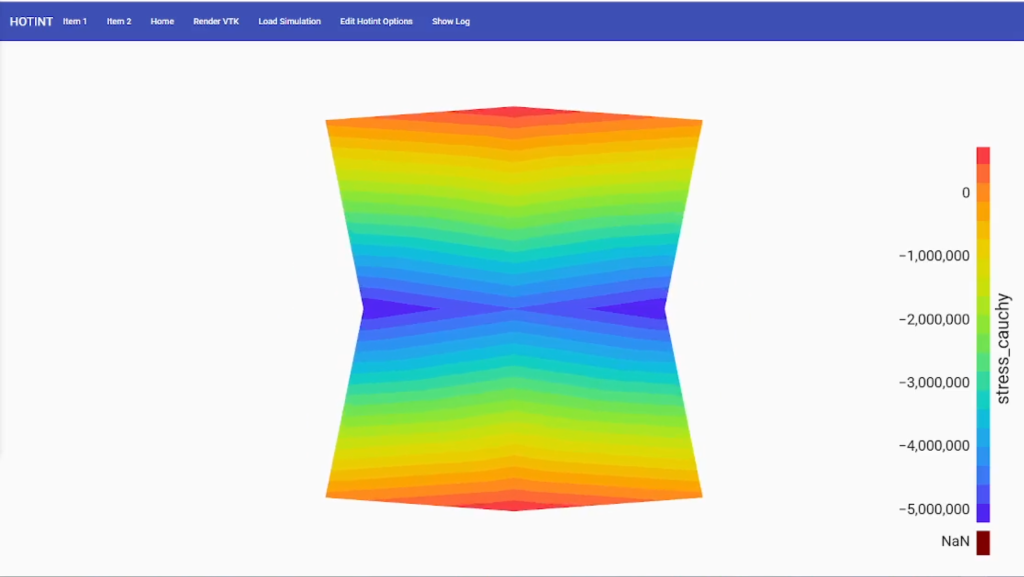

As a purely image-based transfer of 3D views was not expedient for all applications due to the communication bandwidth, the transfer of complete models was designed for certain applications. In the case of FEM (Finite Element Method) models including calculation results, these are only transferred once from the server to the client in the form of a VTK scene description. This means that the 3D view can be changed interactively in the client and the visualization of different result variables can be selected without having to transfer data from the server again.
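The idea of transferring the model once and then switching result variables locally can be sketched as follows. The `FemScene` shape, the class name and the synchronous `fetchScene` callback are assumptions made for this illustration; the actual VTK scene description is considerably richer.

```typescript
// Hypothetical, strongly simplified stand-in for a transferred FEM scene.
interface FemScene {
  nodeCount: number;
  results: Record<string, number[]>; // one scalar per node for each result variable
}

class FemSceneView {
  private scene: FemScene | null = null;
  private readonly fetchScene: () => FemScene;
  transferCount = 0; // number of server transfers, kept for illustration

  constructor(fetchScene: () => FemScene) {
    this.fetchScene = fetchScene;
  }

  // Returns the per-node values of one result variable. The scene is fetched
  // from the server on first use only; later selections work on the local copy.
  select(variable: string): number[] {
    if (this.scene === null) {
      this.scene = this.fetchScene();
      this.transferCount++;
    }
    const values = this.scene.results[variable];
    if (values === undefined) {
      throw new Error(`unknown result variable: ${variable}`);
    }
    return values;
  }
}
```

Selecting first one result variable and then another leaves `transferCount` at 1, which is the behaviour described above.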

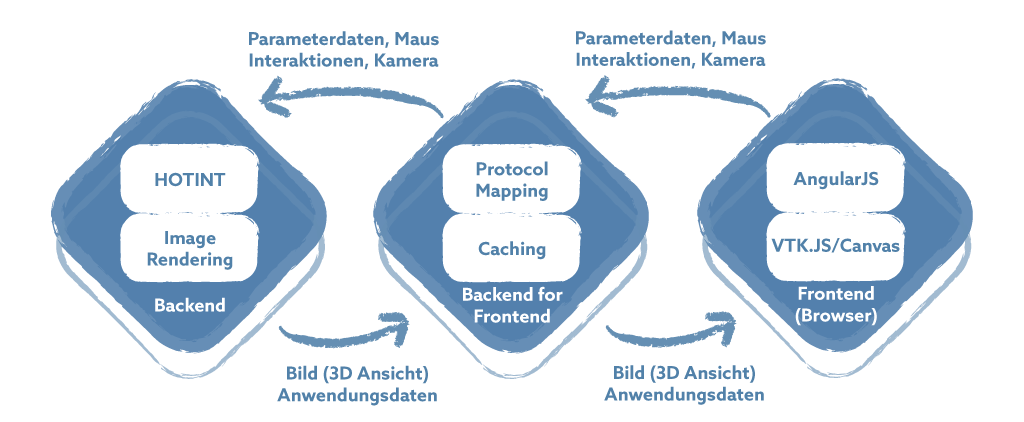

A backend-for-frontend (BFF) based on Node.js was implemented to connect the web clients. This approach was chosen because the integration of a complete web server would have been contradictory to the minimally invasive adaptation of the existing application and Node.js also offers good support for gRPC and GraphQL. The backend makes the data available to the BFF in generic form via gRPC and this in turn delivers the data to the frontend via GraphQL. The BFF is responsible for mapping the various protocols and can also implement intelligent caching mechanisms. By using the BFF, the extensions in the backend (HOTINT) can be kept to a minimum. The following figure shows the implementation concept.

Figure 1: Implementation concept
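One way the BFF could cache data between the backend and the front end is sketched below. The `FetchFrame` type stands in for the gRPC call to HOTINT, and the class name, the capacity and the least-recently-used strategy are our own assumptions rather than the implemented mechanism.

```typescript
// Stand-in for the gRPC request to the backend; the signature is an assumption.
type FetchFrame = (timeStep: number) => Promise<Uint8Array>;

class FrameCache {
  private readonly frames = new Map<number, Uint8Array>();
  private readonly fetchFrame: FetchFrame;
  private readonly capacity: number;

  constructor(fetchFrame: FetchFrame, capacity: number) {
    this.fetchFrame = fetchFrame;
    this.capacity = capacity;
  }

  // Returns the frame for a time step, asking the backend only on a cache miss.
  async get(timeStep: number): Promise<Uint8Array> {
    const cached = this.frames.get(timeStep);
    if (cached !== undefined) {
      // Re-insert to mark the entry as most recently used
      // (a Map iterates its keys in insertion order).
      this.frames.delete(timeStep);
      this.frames.set(timeStep, cached);
      return cached;
    }
    const frame = await this.fetchFrame(timeStep);
    this.frames.set(timeStep, frame);
    if (this.frames.size > this.capacity) {
      // Evict the least recently used entry, which is the first key in the Map.
      const oldest = this.frames.keys().next().value;
      if (oldest !== undefined) this.frames.delete(oldest);
    }
    return frame;
  }
}
```

With such a cache, repeated requests for the same time step, for example when the user steps back in the animation, would not reach the backend again.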

Technical realization and implementation

New findings in the course of implementation could have necessitated changes and adjustments to the planned implementation concept. Surprisingly, this was not necessary in this specific case: the concept could be implemented as planned without any major deviations. In HOTINT, only the following changes were ultimately necessary:

- OpenGL Off-Screen Rendering

- Export / import of parameter settings as JSON

- Extension by VTK scene generation for visualization of FEM calculation results

The previous render code with OpenGL did not have to be adapted. The code base of HOTINT has a scope of approximately 200,000 LOC (Lines of Code) with ~300 classes. The HOTINTWeb extension has approx. 2,000 LOC, while the adaptations to HOTINT were very small at ~500 LOC (0.25 %).

The backend was implemented with gRPC as planned. The individual images for the image-based transmission of the 3D display are created with off-screen rendering. The GLFW, glbinding and glm libraries were used for this. In order to reduce the data volume when transferring the individual images, they were compressed to JPEG images using TurboJPEG.
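Why compression matters for image streaming becomes clear from a rough estimate of the uncompressed data volume. The sketch below is plain arithmetic; the function names are hypothetical, and the resolution and frame rate in the usage note are assumed example values, not figures from the project.

```typescript
// Uncompressed size of one RGB frame in bytes (3 bytes per pixel).
function rawFrameBytes(width: number, height: number): number {
  return width * height * 3;
}

// Bandwidth in megabits per second needed to send frames of the given size
// at the given frame rate (1 megabit = 1,000,000 bits).
function bandwidthMbps(bytesPerFrame: number, framesPerSecond: number): number {
  return (bytesPerFrame * 8 * framesPerSecond) / 1e6;
}
```

For an assumed view of 1280 × 720 pixels, `rawFrameBytes(1280, 720)` yields 2,764,800 bytes per frame, and `bandwidthMbps(2764800, 30)` yields roughly 663.6 Mbit/s for 30 uncompressed frames per second.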

The backend-for-frontend (BFF) was developed from scratch using Node.js. gRPC-JS was used for communication with the backend / HOTINT. The Apollo Server was used as the GraphQL framework, with GraphQL Mutations being used to control the simulation and GraphQL Subscriptions being used to transfer the images / messages.

Angular was used for the front end in combination with Material Design. vtk.js is used for interaction with the 3D view, including when the view is transferred as an image, with the advantage that camera interactions do not have to be re-implemented manually.

Interaction with the simulation roughly corresponds to the following process:

- The simulation environment is prepared (selection of the project file).

- The simulation run is started.

- During the simulation run, HOTINT continuously generates new time steps and messages. These are communicated to the front end with the help of the back end and the BFF.

- The front end decides when new images are required or when picking (selection of elements) should be started. It is also possible to fall back on previous time steps at any time.

- The simulation is completed or stopped.
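The process above can be read as a small state machine. The following sketch is an illustration under our own assumptions: the state names, the class and its methods are hypothetical and do not correspond to the actual HOTINTWeb code.

```typescript
type SessionState = "idle" | "prepared" | "running" | "finished";

class SimulationSession {
  state: SessionState = "idle";
  private readonly steps: number[] = []; // simulation times of the received steps

  // Step 1: select the project file.
  prepare(projectFile: string): void {
    if (this.state !== "idle") throw new Error("session is already prepared");
    if (projectFile.length === 0) throw new Error("no project file selected");
    this.state = "prepared";
  }

  // Step 2: start the simulation run.
  start(): void {
    if (this.state !== "prepared") throw new Error("prepare the session first");
    this.state = "running";
  }

  // Step 3: called whenever the backend reports a new time step.
  onTimeStep(time: number): void {
    if (this.state !== "running") throw new Error("simulation is not running");
    this.steps.push(time);
  }

  // Step 4: the front end may request the latest step or an earlier one.
  timeAt(index: number): number {
    const time = this.steps[index];
    if (time === undefined) throw new Error(`no time step at index ${index}`);
    return time;
  }

  get stepCount(): number {
    return this.steps.length;
  }

  // Step 5: complete or stop the simulation.
  stop(): void {
    if (this.state !== "running") throw new Error("simulation is not running");
    this.state = "finished";
  }
}
```

Keeping the received time steps in the session is what allows earlier steps to be revisited, even after the run has finished.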

The following illustration compares the previous desktop application and the new web application:

Screenshot 1: old desktop application

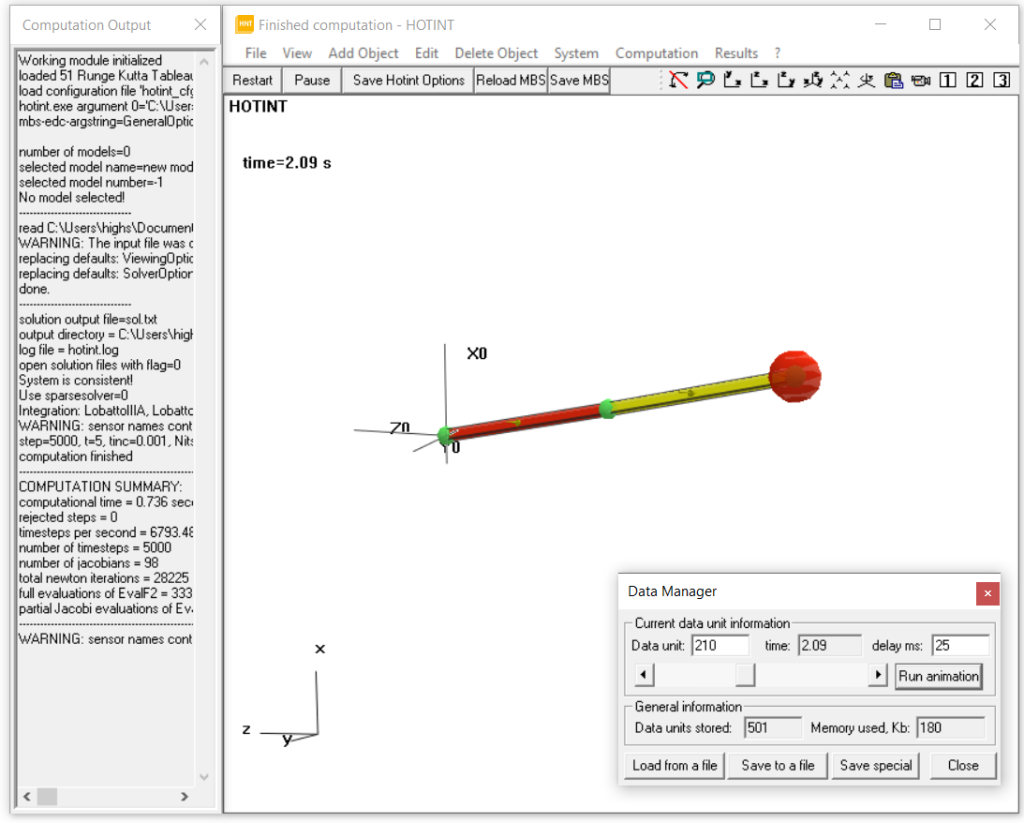

Screenshot 2: new web version


Summary / Outlook

As mentioned at the beginning, the majority of user interfaces today are realized with web technologies because they enable low-threshold access for a wide range of users. Traditional desktop applications are now also confronted with this pressure of expectation. In this context, the question of an appropriate approach arises: complete new development or gradual modernization (reengineering). Of course, the decision must be made individually for each project and depends heavily on the scope and quality of the existing code base. However, it is not the case that a new development is generally the better solution. In the project presented here, the minimally invasive reengineering approach and the agile approach have proven their worth. Expectations in terms of results and effort were even exceeded, as the project was completed within a lead time of around three months and with around 500 person hours.

All in all, the following factors were decisive for our success:

- The agile approach and regular joint coordination of interim results and the next steps.

- The chosen minimally invasive approach.

- The future-proof architecture, in which existing code and extensions can be maintained and further developed independently of each other.

Authors

Martin Hochstrasser, MSc

Software Developer

DI (FH) Josef Jank, MSc

Senior Software Architect & Project Manager

DI (FH) Alexander Leutgeb

Head of Unit Industrial Software Applications