Analyze contracts using AI

How LLMs are revolutionizing everyday B2B life

Contract negotiations tie up a lot of time and resources in companies. Today, large language models analyze and compare contracts automatically and can be operated with currently available solutions on local infrastructure.

by DI (FH) Stephan Leitner

Contents

- From raw text to structured analysis: what happens before AI

- RAG instead of installments: The solution for long contracts

- Embeddings: Precision in contract comparison

- Controlling hallucinations: Trust with limits

- On-premises instead of cloud: control over the infrastructure is crucial

- Integration into existing systems: The next step towards a company-wide solution

- Conclusion

- Author

- Contact us

A new 120-page framework agreement, a short review period, an overworked legal team. This scenario is omnipresent in the B2B sector. Large Language Models (LLMs) can fundamentally change this process, provided the technical implementation is right. Those who understand the technical fundamentals will make more informed decisions – when it comes to infrastructure, risk assessment and building a sustainable solution for contract analysis.

From raw text to structured analysis: what happens before AI

Before an LLM can process a contract, it must be available in a suitable format. In practice, contracts are often only available as scanned PDFs or poorly structured Word documents. For an AI, scanned PDFs are just images without machine-readable text.

Optical Character Recognition (OCR) is used here, which recognizes characters in images and converts them into machine-readable text. Modern OCR solutions achieve high recognition rates, but errors can still occur with poor scan quality, handwritten annotations or complex tables.

Pre-processing is therefore an often underestimated part of the system. This includes the correction of OCR errors, the recognition of document structure (sections, clauses, attachments), the processing of tables and images and the normalization of date formats or currency specifications.

RAG instead of installments: The solution for long contracts

A central technical limit of LLMs is the context window. This is the maximum amount of text that a model can process per request. Current models can handle significantly more than previous generations, but extensive contracts with annexes, service specifications and framework agreements quickly exceed this limit if you try to process everything in a single query.

The solution is called Retrieval Augmented Generation (RAG). The principle is elegant: the entire contract is stored in small sections (chunks) in a vector database. Whena userasks a question, the system first searches for the relevant text passages and transfers them to the LLM with the question. The model then answers only on the basis of these passages, not from its general training knowledge.

This has two decisive advantages. Firstly, the approach scales: the document length no longer plays a role because only relevant sections are ever loaded. Secondly, the answer is comprehensible: Every statement in the LLM can be traced back directly to a specific passage in the original document.

In practice, many systems combine RAG with a hierarchical document structure: clauses are not only saved as continuous text, but are also provided with metadata – such as clause type, contracting party or validity date. This makes targeted queries such as “Show all clauses on the contract term from supplier contracts from 2023” possible in the first place.

Embeddings: Precision in contract comparison

One use case in which LLMs provide particularly efficient support is the comparison of contracts. Deviations from an internal standard can be identified, as can changes made to a contract by a contractual partner during negotiations.

A simple text search quickly reaches its limits here. It finds identical words, but no similarities in content. A clause that limits liability to “direct damages” and another that “excludes consequential damages” have the same meaning in terms of content, but look completely different in terms of text.

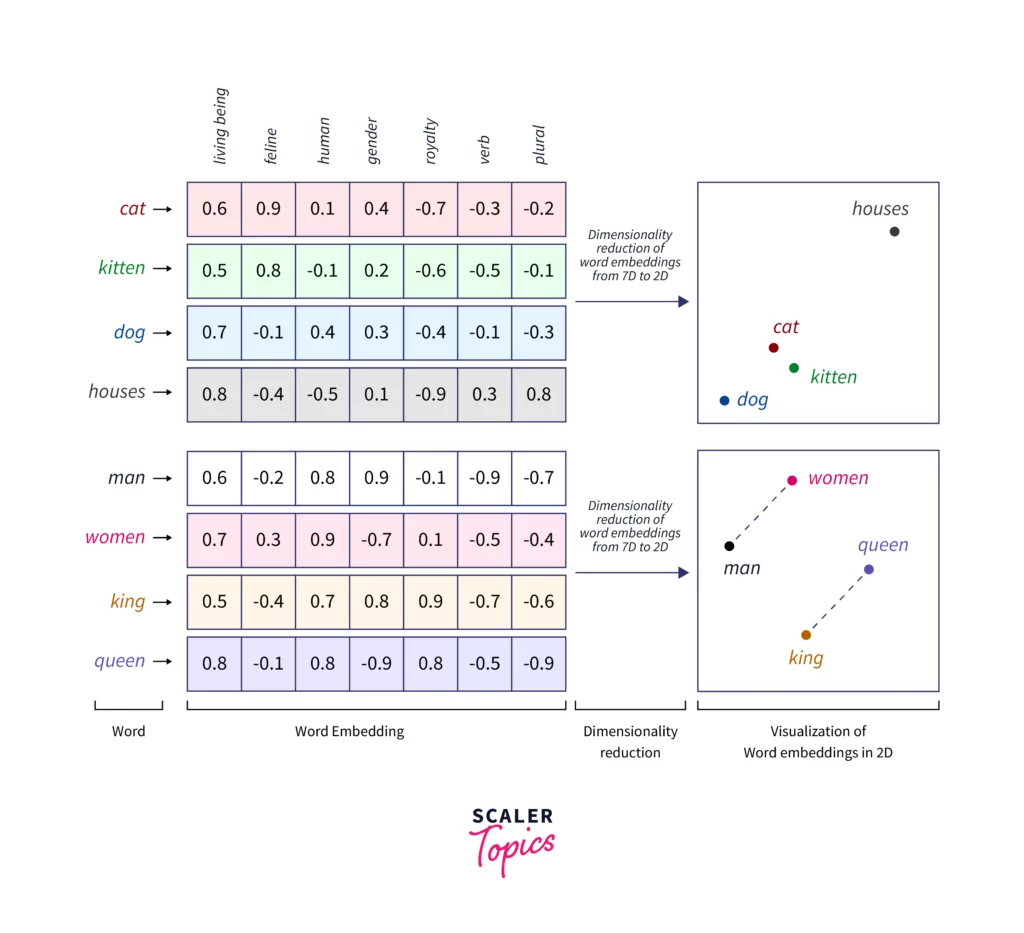

Embeddings solve this problem. Sections of text are converted into high-dimensional mathematical vectors. Similar content lies close together in the vector space, regardless of the exact wording. The system thus recognizes semantic similarities and specifically identifies divergent clauses.

Image source: https://www.scaler.com/topics/tensorflow/tensorflow-word-embeddings/

Controlling hallucinations: Trust with limits

Hallucinations are a well-known problem with LLMs. The model produces statements that sound plausible but are factually incorrect. Such errors are particularly critical in the contractual context. An incorrectly summarized warranty clause or an overlooked exemption from liability can have considerable financial consequences.

Professional systems counter this risk with several measures. The most important is transparency: every statement made by the AI is backed up with the exact original passage from the RAG process from which it was derived. Users can click to jump to the source and check the statement manually.

Additional measures are also used. Prompt engineering explicitly instructs the model to only answer when it is certain, to provide sample answers and to openly admit when it does not know something. Fine-tuning refers to the further training of a pre-trained model on a specialized data set, such as specialist legal texts, sample contracts or annotated decisions, in order to improve the quality for the specific use case. Guardrails add an additional layer of security: a downstream agent checks the answers, automated tools recognize typical hallucination patterns, and fact checks ensure consistency with the source documents. Advanced systems also use multi-step reasoning, where the model proceeds step by step instead of directly generating an answer.

Despite all these measures, AI cannot replace legal expertise. It reduces manual effort and gives legal teams more time for the really important decisions.

On-premises instead of cloud: control over the infrastructure is crucial

Contracts contain sensitive business information – price conditions, delivery quantities, exclusive agreements. Transferring such documents to external cloud services means a loss of control over critical company data. For many companies, this is not an acceptable option.

Operating an LLM on your own resources (on-premises) solves this problem. The data does not leave the company network. There is no dependency on external services, no unclear contractual conditions for data transfer and no questions about the use of input data for model training.

The technical basis for this is provided by powerful open source models. Models such as Mistral, Qwen and Gemma can be operated entirely on your own resources. Suitable systems are now available from around EUR 5,000, although at this price range, runtime and throughput must be reduced for parallel use. Solutions that provide enough resources for most SME requirements are available from around EUR 50,000.

By integrating company-specific contract data, the quality of these models can be significantly improved and optimized for the specific use case. On-premises operation also enables full control over model versions; updates are only imported after internal review and approval.

Integration into existing systems: The next step towards a company-wide solution

The technical core function of an LLM, which reads, evaluates and compares contracts, can be provided and purchased as a stand-alone product. The next step is the structured roll-out of this contract LLM into the existing company infrastructure.

Many companies already use contract management systems. An AI solution that runs as an isolated solution alongside these systems creates media disruptions and is hardly used on a day-to-day basis. Seamless integration via standardized interfaces is therefore crucial. With on-premises operation, the company has complete control over this interface. Adaptations, extensions and security updates can be implemented without external coordination.

A well thought-out roles and rights concept is also essential. Not every employeeshould be able to view all contracts. The AI solution must respect existing access rights and be integrated into the company’s identity management – this is also easier and more secure to implement on-premises than in a shared cloud environment.

Conclusion

AI-supported contract analysis is now ready for production. LLMs, combined with RAG, domain-specific embedding models and controlled output, solve the key challenges: long documents, semantic comparison and traceability.

Those who opt for on-premises operation gain full control over models, data and infrastructure in addition to data protection. It also creates a basis that can be adapted to changing requirements without dependence on external providers. Companies that lay these foundations today create a scalable and sustainable solution that is tailored precisely to their own requirements.

Ansprechperson

Author

DI (FH) Stephan Leitner

Head of Unit Domain-specific Applications